Research reveals what workers most want to automate through AI

Article writing dominates workplace automation, with 35 million uses of ready-made ChatGPT prompts. That’s 5x more than any other work category. New research from Paligo, analyzing AIPRM’s prompt library, reveals what’s really being automated at scale.

Since the launch of ChatGPT, over 200 million organizations worldwide have adopted generative AI for their daily work, yet most still struggle to measure tangible returns.

Although Large Language Models (LLMs) are increasingly becoming embedded in everyday work processes, companies still struggle with where to deploy them effectively, how to communicate their value, and how to ensure those investments translate into meaningful returns.

MIT research suggests 95% of companies have seen zero return on their AI investments so far. A recent Financial Times analysis of S&P 500 company filings shows that major US firms struggle to describe any concrete benefits of AI within their organizations, even after building up hype around AI solutions in their earnings reports.

The success of adoption varies widely from one organisation to the next, but it’s clear that workers are actively experimenting with AI in hopes of unlocking further time-saving and productivity gains.

And while anyone can type a prompt into an LLM interface, the surge in ready-made prompts and automation templates that appear on sites such as AIPRM shows that people aren’t only using LLMs at a surface level; they’re also trying to systematize and scale their workflows in ways that go beyond ad-hoc prompting.

But which work-related tasks are we trying to automate the most?

Our research

To discover which work-related tasks are being automated the most, the team at Paligo analysed usage data by category from tens of millions of interactions on AIPRM’s library of public Prompts and GPTs. The patterns in this dataset reveal what users genuinely intend to do with LLMs, showing which specific parts of their workload people are deploying generative AI to support.

Key findings

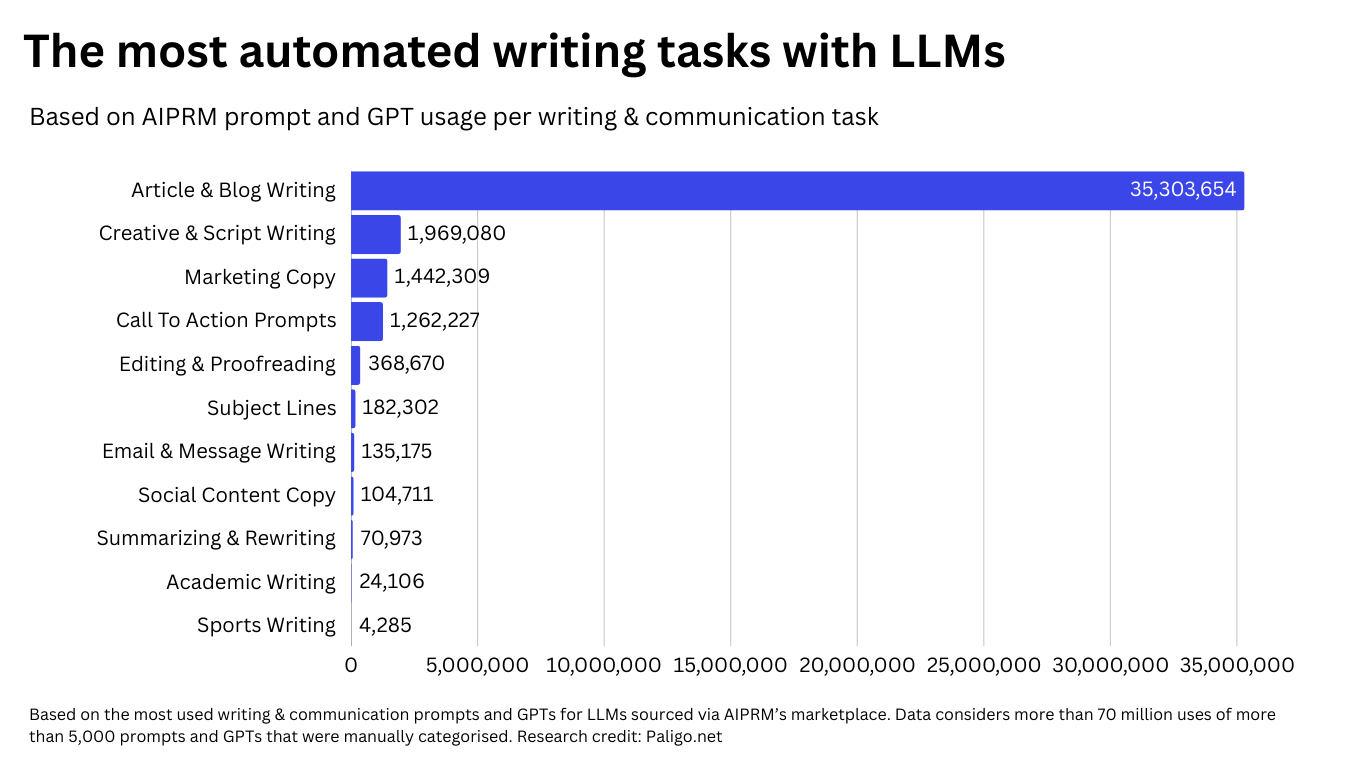

- Article and blog writing is the most in-demand task for people to automate on LLMs, with more than 35 million uses of these prompts on AIPRM.

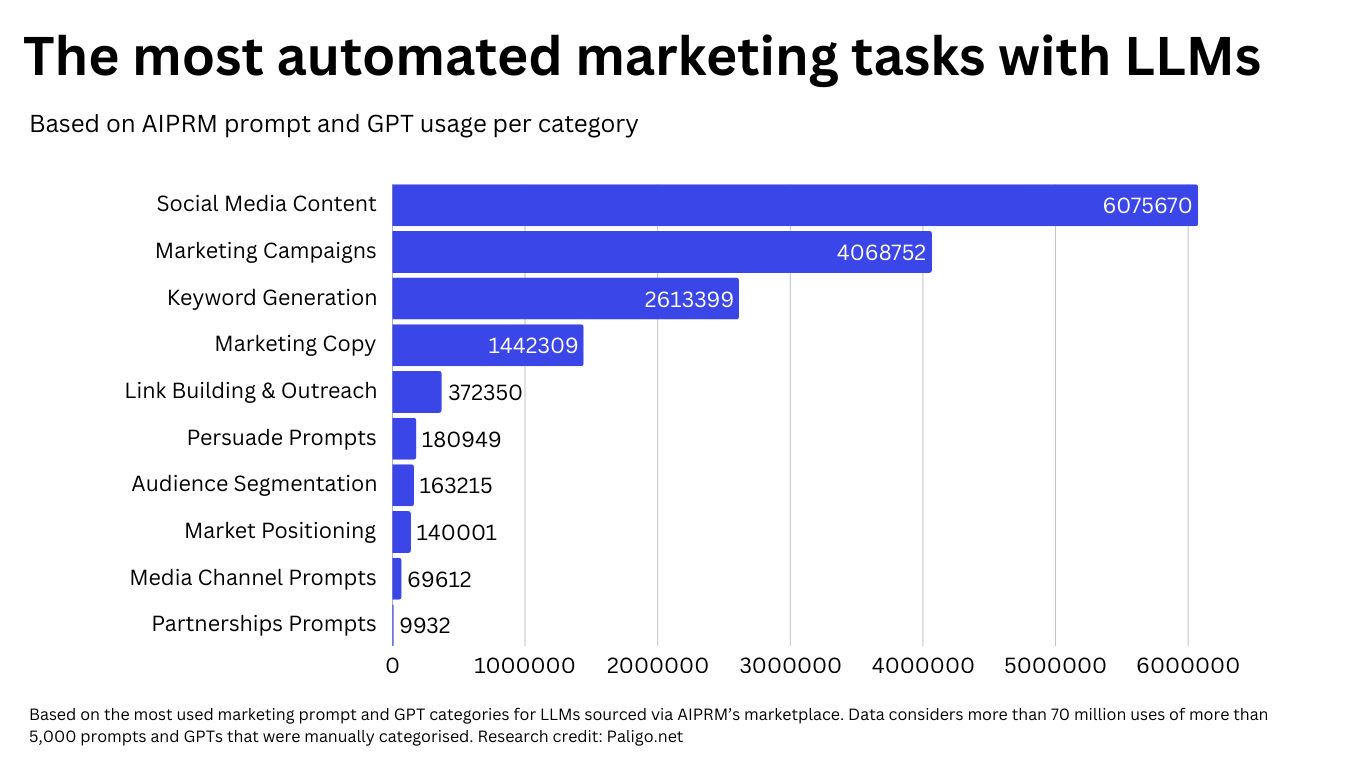

- Social media tasks (6 million uses) and marketing tasks (4 million) are the third and fourth most in-demand categories of work for automation.

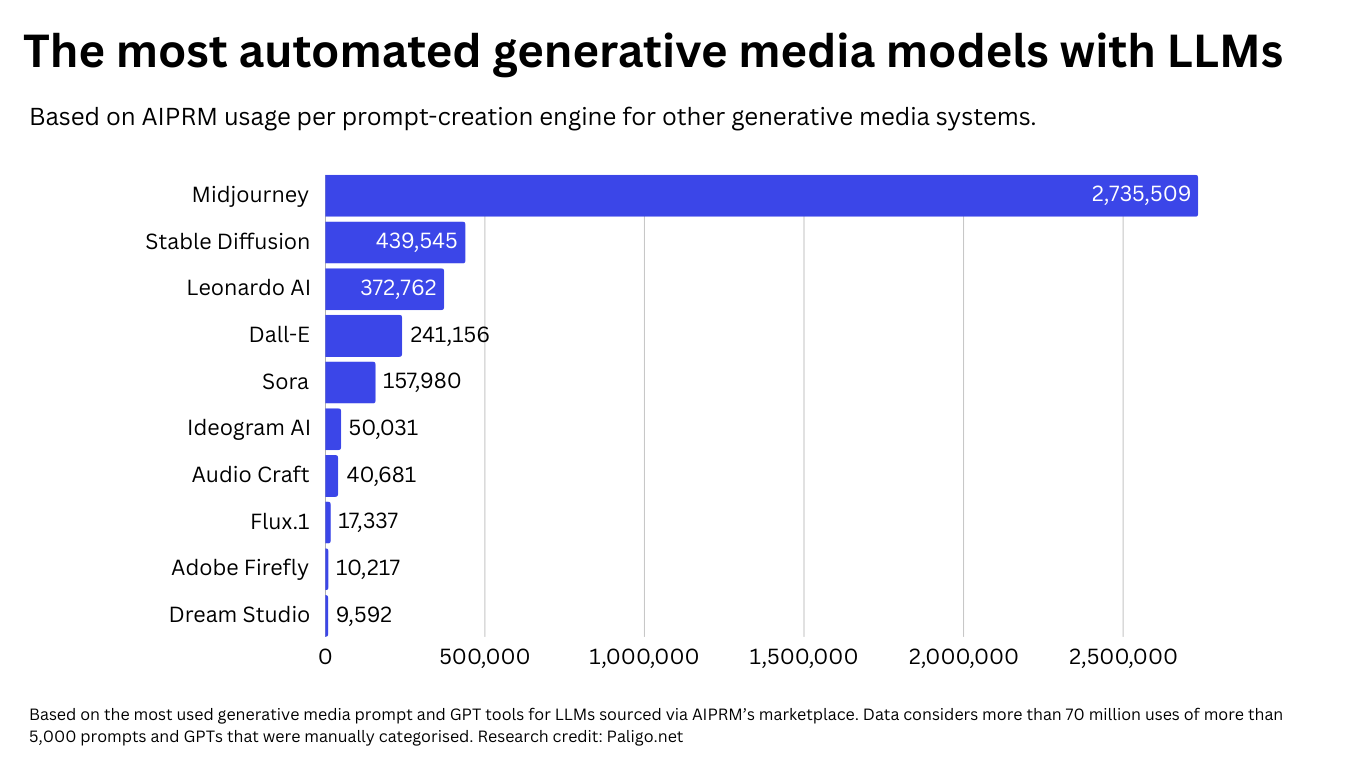

- Midjourney is the most popular image generation tool that people want to automate, with over 2.7 million uses via AIPRM’s prompts.

- The vast majority of automation demand falls into eight broad categories: writing & communication, marketing & advertising, information & research, visual & creative generation, data & analysis, planning & business strategy, software & development, and customer interaction.

35 Million uses

Article writing (47.4%) dominates workplace automation, 5x more than any other task

What type of work do people want to automate the most with LLMs?

Large Language Models, such as ChatGPT, can provide answers within seconds for simple queries and prompts that require writing or grammar-related responses.

The ease with which they can produce articles, which are suitable for a range of mediums and goals, helps to explain why writing and communication-related automation tasks are by far the most in-demand.

Ranking first for automation demand is article and blog writing (35.3 million uses). Uses of prompts and GPTs for this task are five times more common than the second most common type of task: social media automation.

While article generation is clearly the most commonly automated task, the systemic consequences of this reliance are measurable and accelerating. A recent survey by Terzo and Viz Capitalist of more than 1,700 businesses found ‘inaccuracy’ to be the top risk identified above others such as ‘job displacement’ and ‘cybersecurity’. Alongside this, companies are already rehiring positions that were previously cut in order to fix major errors in content production that AI has caused.

The most popular writing prompt within our analysis, with 1.9 million uses, is described as a humanized, SEO-optimized, long-form article writer. Overall, 760 of the 5,860 prompts and GPTs in our research sample mention writing as part of their output.

The temptation for businesses to assume LLMs can produce writing at scale with just a few prompts has likely fueled the growing ecosystem of prompts and GPTs used for writing needs.

But regardless of how ‘humanised’ LLMs claim their content to be, there is a wide and severely understated gap in quality, accuracy and originality between the writing that LLMs can produce at any scale and the work of a human writer.

AI writing often amplifies biases and stereotypes that humans can recognize and avoid. It frequently produces false or misleading information because it generates text without verifying facts. LLMs don’t think or judge the way humans do, but they can apply context and follow ethical guidelines based on their training.

Writing-related tasks appear more than once in the top 10 most in-demand prompt automations, with creative & scriptwriting, as well as marketing copy, each being accessed more than 1.4 million times.

Creative and scriptwriting prompts support users in developing storylines, dialogue, and character profiles. Marketing copy is being generated to support the creation of optimised messaging across multiple marketing channels.

The most automated writing tasks with LLMs

Beyond the top three most commonly automated writing tasks that also appear in the most popular prompt list across all categories, we can see more specific use cases for writing that attract millions of users.

Call-to-action (CTA) prompts (1.2 million uses) are the third most popular for writing automation. They produce short, persuasive pieces of copy designed to drive clicks, conversions, or user response on web pages and applications.

Editing and proofreading prompts see more than 360,000 uses from people looking for a greater automation solution. This lower usage likely stems from the ease with which users of LLMs can paste existing copy straight into a chat window and request fixes directly, making specialized templates feel unnecessary.

But where LLMs struggle with longer, more complex documents, structured prompts and GPTs have more appeal under the assumption they can handle edits more consistently at scale. However, there is no evidence that the rate of hallucinations from LLMs can be eradicated regardless of the quality of a user’s prompt.

Although they only see 24,106 uses, academic writing prompts are the tenth most popular writing task for automation. According to research from the Higher Education Policy Institute, the proportion of higher education students using generative AI to create material for assessed work increased from 54% in 2024 to 88% this year.

Issues surrounding accuracy in generative AI output do not escape the academic world either. With mounting evidence of LLMs providing advice that endangers users, OpenAI revised its policy in October 2025 to limit ChatGPT’s ability to give licensed professional advice, which includes medical or legal guidance.

A UNESCO survey of HE institutions also shows that enthusiasm is tempered by uncertainty, with more than half of respondents admitting they feel hesitant about using AI effectively in teaching or research, and one in four reporting that their institutions have already faced ethical issues, from student overreliance to authorship disputes and biased outputs.

Similarly, a 2024 study has shown that students’ overreliance on LLMs is linked to a weakening of their critical thinking, decision-making, and analytical abilities.

Social media and marketing-related tasks

Written content is the new factory line. Prompts for social media content creation were used over 6 million times. The sheer volume reflects how workplaces are under increasing pressure to produce more social-friendly assets and copy, with LLMs supporting their process.

AI-generated content has already surpassed human output in terms of volume. A study released by Graphite in October 2025 revealed 50% of new written content on the internet is likely produced by AI.

Among marketers, SurveyMonkey reveals that more than half in the sector use AI to optimize content, and over 40% rely on it to create material, brainstorm ideas, support social media strategies, and analyze data.

Further down the ranking, tasks such as link-building & outreach (372,350 uses), audience segmentation (163,215), and media channel selection (69,612) collectively paint a picture of people trying to outsource the more strategic aspects of marketing.

Prompts for these activities are being used hundreds of thousands of times within our dataset. They are tasks that traditionally rely on judgment, nuance, and experimentation, yet automation is being asked to shortcut that process. Whether these tools are enhancing strategy or quietly flattening it into something generic remains uncertain. Still, the volume of usage suggests many workers are betting that AI can shoulder a significant amount of the marketing workload.

Midjourney is the most popular generative media tool for automating design tasks

A large share of activity in our research shows people using LLMs as a prompt-creation engine for other generative media systems. The most common of these is Midjourney, with more than 2.7 million uses of Midjourney-focused prompt templates in our research.

2.7 Million uses

Midjourney (3.6%) is the most automated image generation tool, as workers use LLMs to craft better prompts

This reflects the popularity of the model and shows that users rely on LLMs to craft more precise or stylised instructions before pasting them into Midjourney.

One of the most widely used Midjourney helpers, DishPrompt, has over 4,700 uses and generates visually rich food image prompts from recipe descriptions. It’s estimated that over 15 billion images have been created through generative AI since 2022, and major brands now incorporate AI media generation into their major campaigns, such as Coca-Cola’s most recent Christmas TV commercials in 2024 and 2025.

Stable Diffusion (439,545 uses) and Leonardo AI (372,762) rank second and third for prompt usage. One widely used Stable Diffusion template has been run more than 50,000 times and offers five stylistic variations from a single keyword.

The lower usage of platforms like Sora, Flux, and Firefly suggests that newer or more specialized systems haven’t yet built the same level of everyday utility or community momentum, even if their underlying performance is comparable to more popular models.

Audio-first tools, such as AudioCraft (40,681), rank lower in terms of usage, with generative audio use cases being more niche compared to the broader appetite for visual creation.

Other notable work tasks with high automation demand

Prompts that assist with data and analysis have been used more than 2.6 million times on AIPRM. These tasks range from modelled testing to spreadsheet cleaning and data engineering.

Tasks that require support with customer interaction, such as draft responses and CRM strategy, have collectively seen more than 1.3 million uses.

The automation of business planning and strategy has also seen over 2.3 million uses, which includes prompts and GPTs to help with startup ideas, product naming, and descriptions, as well as scaled pricing recommendations.

Generation needs are evolving, but is the output improving?

The results of our analysis show that many workers aren’t just experimenting with AI anymore; they’re reorganising core tasks around it. From writing and marketing to design, research, and strategy, the demand signals mapped across AIPRM show where generative AI is already supporting day-to-day needs.

Even while this shift accelerates, it remains crucial that generative AI is inclusive and maintains standards of quality, ensuring it augments human work rather than replacing it with a cheaper, blander version of itself. While our research indicates demand for automation in many areas of work, the quality at which AI can complete those tasks requires more regular evaluation.

Looking ahead, the rise of agentic AI, where systems can plan, take actions, and complete multi-step tasks autonomously, signals an even greater evolution from prompting tools to true work automation. This is where AI won’t only assist our workflows but will increasingly be able to run them end-to-end.

74.3 Million uses analyzed

Spanning 56 work categories and 5,000+ prompts

Why technical documentation cannot afford to rely on LLMs

We set out to produce this research in order to raise awareness of how millions of people are relying on inaccurate, error-strewn AI models to produce or assist them with work-related tasks under the impression that the output will be comparable to that of a professionally trained human. Unfortunately for those buying into the prompt and GPT marketplace, that isn’t the case.

Like many roles, technical documentation writing demands quality and accuracy. Writers’ responsibilities have shifted toward gathering, collating, and organizing factual, accurate information. This is ingrained in their role, and there’s a high level of trust that they deliver on that promise.

Here are more detailed reasons why LLMs can’t deliver on the needs of technical documentation writers:

- Accuracy is essential: Documentation errors impact product usage, customer success, and organizational liability. Unlike marketing copy, where approximation can suffice at times, technical content errors cascade into customer integrations, compliance violations, and support overhead. OpenAI’s October policy change, banning medical advice due to liability concerns, demonstrates that even AI providers recognize certain content domains cannot tolerate the error rates inherent in automated generation.

- Updates must cascade systematically: A single software update, regulatory change, or product modification often requires coordinated updates across hundreds of help articles, user guides, and API references. Content generated via standalone prompts lacks mechanisms to track where information is used or to ensure updates propagate correctly.

- Version control and audit trails are required: Regulated industries demand documentation showing who changed what and when. Teams need to revert changes when updates introduce issues. Content generated by external AI tools lacks inherent versioning or change history. Accountability often points towards hallucinations.

- Content must be structured for reuse and AI consumption: Technical documentation follows single-source principles. The same component appears in web help, PDF manuals, in-app tooltips, and increasingly, as structured data consumed by AI agents like kapa.ai to answer customer questions. Unstructured generation creates redundancy, inconsistency, and content that AI systems cannot reliably parse.
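To make the point about tracking reuse concrete, here is a minimal sketch of a "where-used" index: a lookup from each reusable content component to every output that includes it. It is an illustration only, not a description of how any particular documentation platform works, and every document name and component ID below is hypothetical.

```python
from collections import defaultdict


def build_where_used(documents):
    """Map each component ID to the set of documents that reuse it."""
    where_used = defaultdict(set)
    for doc_name, component_ids in documents.items():
        for component_id in component_ids:
            where_used[component_id].add(doc_name)
    return where_used


# Hypothetical outputs assembled from shared, single-sourced components.
documents = {
    "web-help/install": ["comp-system-requirements", "comp-install-steps"],
    "pdf/user-guide": ["comp-system-requirements", "comp-licensing"],
    "in-app/tooltips": ["comp-install-steps"],
}

where_used = build_where_used(documents)

# When one component changes, list every output that needs review.
affected = sorted(where_used["comp-system-requirements"])
print(affected)
```

Text generated by a standalone prompt has no component IDs to index, so a lookup like this has nothing to work from. That is the gap the list above describes.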

Research methodology

Researchers at Paligo set out to discover what work tasks users of LLMs are trying to automate the most. They analyzed more than 5,000 of the most commonly used prompts and GPTs posted to AIPRM.com, a site where users share prompt templates for millions of others to use and integrate into their own ChatGPT and other generative AI workflows. They manually assigned these prompts and GPTs by their title and description into a maximum of three separate categories before summing together the usage of each category. The final analysis compares 74.3 million uses among 56 categories of work tasks. Data current as of November 2025.
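The aggregation step described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the researchers' actual code. It assumes a prompt's uses are counted in full toward each category it is assigned to, and that each category's share is taken against the sum of all category totals; the methodology does not spell out either detail. The sample prompts, field names, and figures are hypothetical.

```python
from collections import Counter

MAX_CATEGORIES = 3  # the methodology caps each prompt at three categories


def usage_by_category(prompts):
    """Sum uses per category.

    Assumption: a prompt's uses count in full toward every category it has.
    """
    totals = Counter()
    for prompt in prompts:
        categories = set(prompt["categories"])
        if not 1 <= len(categories) <= MAX_CATEGORIES:
            raise ValueError(f"{prompt['title']!r} needs 1 to {MAX_CATEGORIES} categories")
        for category in categories:
            totals[category] += prompt["uses"]
    return totals


def category_shares(totals):
    """Return each category's share of the summed category totals."""
    grand_total = sum(totals.values())
    return {category: uses / grand_total for category, uses in totals.items()}


# Hypothetical sample data, not figures from the research.
prompts = [
    {"title": "Long-form article writer", "uses": 1000,
     "categories": ["article writing", "seo"]},
    {"title": "Social post generator", "uses": 400,
     "categories": ["social media"]},
    {"title": "Ad copy helper", "uses": 100,
     "categories": ["marketing copy", "social media", "article writing"]},
]

totals = usage_by_category(prompts)
shares = category_shares(totals)

for category, uses in totals.most_common():
    print(f"{category}: {uses} uses ({shares[category]:.1%})")
```

Because a prompt can sit in up to three categories, the summed category totals under this assumption can exceed the number of individual prompt runs.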

For a full glossary of our AI prompt categories, click here.

---

Our research reveals a critical gap: 35 million prompt uses are aimed at automating article writing. However, professional documentation requires more than speed; it needs structure, quality control and governance.
