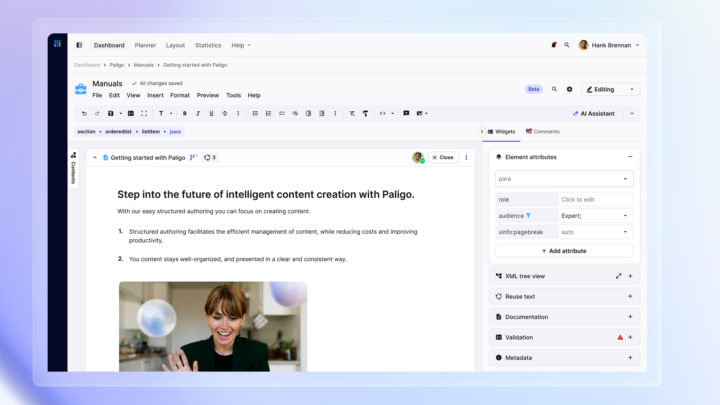

Welcome, everyone. Glad to have you here. I'm Andy, marketing manager at Paligo, and I'll be hosting today's discussion. So today isn't a product demo. It's a conversation with the people shaping where Paligo's heading and why. So let's get them to introduce themselves. Rahul.

Hello, everyone. Thanks for joining. I'm Rahul. I'm the CEO at Paligo. I'm fairly new at Paligo. I started in December twenty twenty six. And prior to Paligo, I have led product and technology organizations in B2B and B2C companies across six different industries for nearly two decades. Throughout my career, I have worked on leveraging technology and paradigm shifts to deliver customer-centric products and experiences.

Thank you. And Thommie.

Nice to meet you all. I'm Thommie. I'm the VP of Product here at Paligo. I've been here for around four to five years now, and I very likely know a few of you in the audience, which is great. But yeah, even though I've been here for a long time, I'm actually super excited about the journey we're on. So I'm still keeping the energy up, and it's great to have new additions here like Rahul to keep us on our toes. We have a really cool journey ahead of us and we're entering a new chapter now. So looking forward to sharing that with you.

Thank you both. For those of you watching, there's a Q&A window to your right where you can ask the speakers questions. We'll do our best to get to them all, either during the conversation or at the end. And for any that we don't get to, we'll follow up directly with you. Also, everyone who's registered will get the link to this recording within the next couple of days. So, Rahul, you've described what's happening for knowledge professionals now as a printing press moment. What makes you say that?

Yeah.
So, Andy, before I answer that question: I actually joined Paligo because I believe we are at a very important inflection point, where the tools that create knowledge will determine whether your AI system tells the truth or makes things up. And that's exactly the problem that I joined Paligo for. So back to your question, Andy, the printing press moment. When the printing press arrived, it didn't just make books faster. It literally democratized knowledge and changed how we as humanity processed information. We are at that exact inflection point with what I call content intelligence. For decades, technical content existed for humans to read. Manuals, for example, help centers, PDFs. And in all this, the audience was people, us humans. But with AI, this has changed completely. Today, the documentation isn't just for humans. It is consumed by AI agents, your chatbots, your copilots, and also your search engines for that matter. Content is also now training data for LLMs. It is the source that AI pulls from when it answers a customer question. This really changes everything. What makes it a printing press moment is a shift in who is reading your content: not only humans. It's no longer optional for your content to be structured, governed, and machine readable. If it's not, we know that your AI systems will hallucinate. They give wrong answers with complete confidence, and we have seen this many times. I just wanted to bring some data here. Multiple industry reports have shown that roughly eighty percent of enterprise AI implementations remain experimental today. That means they really struggle at the last mile, with sixty-seven percent of the failures attributed to content issues rather than model limitations or compute power limitations. This is why I think we are at a very pivotal moment.
This is what I call the printing press moment.

So at Paligo, you've both talked a lot about structured content being the missing layer in enterprise AI. Rahul, can you explain what that means in practice?

Yeah, absolutely, Andy. At Paligo, over the last couple of months, we have been discussing this at length. And we genuinely believe that enterprise AI is really missing that layer. So let me start with this. Every enterprise is building AI right now. We know that. I'm sure that many of you who have joined today to watch this webinar are also grappling with implementing AI. All of you are buying agentic AI platforms, which connect the tools that you already have. And then you're also rolling out copilots. But we really believe that there is a missing layer, and I'm gonna talk about that now. So you have the AI platforms or agentic platforms that you buy today, which sit on top of your model layer, which is basically your LLM layer. Among these agentic platforms, for example, OpenAI has released something called OpenAI Frontier, and there's Anthropic Cowork or Salesforce Agentforce. We believe that layer is already solved, because there are a lot of players out there solving for that. Now comes the next layer, where you have the tool integration layer in the middle. This integration layer is the layer that really fetches your data. It could be Salesforce, it could be your HRM system, your data warehouses, your ticketing system. We believe that layer is also solved. But then underneath that, there is actually a layer where your AI agent needs to know the real things. Because remember, your agent reads content.
They need to pull that product information, that policy, that procedure, the business logic, the business knowledge, the workflow, and also technical and product documentation. We call that layer the knowledge layer. And here's the problem. Most enterprises have the knowledge layer scattered across different silos, whether it's your SharePoint, your wiki, your knowledge portal, email threads, or different tools and different folders. We know that it is unstructured. We know it is ungoverned. And we also know it's untrustworthy because of that. And you cannot just connect all the tools you want, because your knowledge is sitting in chaos. If you do that, you have just automated bad decisions at machine speed. Structured content means content that knows what it is. It has semantic markup and it is version controlled. It is governed and it is single sourced. And that exactly is the missing layer that I talk about. That is what Paligo provides and will be providing as we move further in this journey. In essence, we will provide the structured truth layer for enterprise AI. And as shown in this slide, the missing layer of structured truth has structure, governance, version control, and single sourcing, and is ready for AI.

There's this idea that AI agents need agency. Can you walk us through this train of thought?

Absolutely. I think this is a very important narrative. Let me start from the top. If we really want agents to be fully functional in the true sense, so that they not only predict but also act on our behalf, just like us humans who have agency, we need to provide agency to agents. So that's one thing. Now, what gives us, as humans, agency? To provide agency requires control, meaning that you need to really give control. And we know that, as humans, if we trust someone, we give control. Same goes for agents.
So what we need to make sure of is that trust is what provides control. And then, whom do we trust? People who are credible, people who are accurate, people who are trustworthy. We give control to them. We give trust to them. So that means that, especially in the world of agentics and AI, trust requires accuracy. And we genuinely believe that, especially in the content world, the world that we all come from, accuracy requires structured content. Meaning that if you don't have structured content, if you have your content sitting in silos, as I mentioned earlier, you will not be able to have an accurate system. And then finally, which is equally important, we really believe that for structured content, you need structured truth. Not only content that is structured, but also governed, which is super important. And that's what Paligo is gonna provide: the structured truth layer for enterprise AI.

So a question for both of you then, and I'll start with you, Thommie, maybe. Before we get into what we're shipping, what are you hearing from content teams right now, and what kind of pressure are they under?

Yeah. So honestly, a lot of companies that we talk to have content strategies already, and they've had those maybe for several years. And solid processes and rituals to cover for edge cases and all of this as well. But what's happening now is so profound. The whole playing field is actually changing, and the strategies might not be ready yet. There are a lot of players out there, the majority actually, who haven't really adapted to this yet. Some might not even have a plan, to be honest. So this is not a little shift. It's a whole playing field changing.

Yeah, absolutely. Thommie, you have a very good point there. And as you know, I have been talking to a lot of our customers, a lot of our users, over the last couple of months now.
When I talk to documentation managers, technical writers, documentation leaders, it's very, very clear. The pressure that you talk about, Andy, is real. And let's not hide it. There is also this existential question that comes up: will AI replace technical writing, and thereby technical writers? I would say, let's not fool ourselves. I still believe the art of technical writing, making sure that the content is created in a trustworthy way, that it is governed, and that it actually has human oversight, is gonna be very, very important for us. With the work that we are doing at Paligo, we really want to make sure that the users of our product become the heroes in this. Meaning that today you may be tech writers, and in the future you're probably gonna be content curators, content orchestrators, or content directors. That's what we see, but the pressure is there. The second thing I wanted to mention, which I also hear in some of my customer interviews, is that because of AI there is this efficiency squeeze, I call it. Meaning that companies want more output with fewer resources. Plus these companies are growing. They want more languages, more channels, more products, and faster updates. So yes, there is this natural assumption that AI is gonna solve all of this, and that also puts a lot of pressure on our users today. And I can guarantee you that we are actually addressing more of that already today with what Thommie is gonna talk about.

And just a reminder to the audience, of course, if you have any questions, please pop them in the Q&A, and we'll get to them when we can. So, Thommie, Paligo's latest release has four features. What is the thread that connects these?

Yeah.
So actually, Andy, first, let me just say that I'm really, really proud of this release. This is so exciting. This release deliberately touches on the full content life cycle. You can see it starts with content creation, then you have content structure, translating it, and also publishing. So I will get to the thread, but I just wanna put that out there. This is a really big release for us, and I'm really happy to see what the audience has to say about it as well and get your input on this. As for the thread, I'm gonna tie this to what Rahul talked about before. He talked about how important structured content is for reliable AI. And with that, our goal is to take structured authoring and make it accessible for everyone, basically democratizing it. That is a thread that goes through this release from the beginning to the end. We're not there yet, but structured authoring has in the past been very much a specialist tool, if you will. And we really wanna turn this into a platform that any team can use. Because the more people that can contribute to structured content, the stronger the AI foundation will become. This release is a big step for us.

Yeah. Just to add to what Thommie mentioned, Andy. For me, the thread that connects all of them is that this release will make structured content easier to create, and thereby also more valuable to consume on the other side. So the idea here is, as Thommie mentioned earlier, we would really like to simplify and democratize structured authoring. This release is a testimony to that, and we're gonna talk more about it.

So let's start with the next gen editor. Thommie, why create a completely new editor in the first place?

Yeah. I mean, we already had one, obviously, right? Now we have two. The more the merrier.
No. But honestly, the existing editor is very powerful. We have trained professionals who are really productive in it. So there's not a real problem with it per se, but we are noticing that the learning curve is higher for, let's say, new users who aren't really used to structured authoring. For first-time users, it's a little bit more of a barrier to pick it up. So you could say the reason for building it, and the main goal, is to create a platform that gives the simplicity and innovation of a modern text editor, a modern doc editor, if you will, but then also brings the power of structured content. Combine those two. That's the real reason for building a new editor.

Yeah. Thommie, I have a follow-up question for you. But before that, I also have one thing to add there. Remember I mentioned earlier that our attempt with this release is to simplify and democratize structured authoring. The next gen editor that we are launching today is a testimony to that, meaning that we will simplify structured authoring so that it becomes usable by more than only tech writers. Because we really see that in your organizations, more users should be able to leverage tools like Paligo. So we're giving users a simple interface that they are normally used to, which on the back of it still has all the superpowers of the DocBook XML structure that we talk about. And then the question for you, Thommie: I understand it's still in beta. When can everyone expect to get their hands on it?

Yeah. So we get this question a lot. I think very soon. I'd say one and a half to two months is very likely, but let me give you a little bit more background. We kept it a beta, and a closed one, so we can work very closely with the users on this. That's been very important, since the editor is the heart of the tool.
It's very important that we work closely with our users so we can act on the feedback that we receive, which is why we kept usage fairly low. But we are now, already next month, working on a really, really cool update that I'm really excited about. It's gonna be a really big set of, let's say, UX improvements. So it kinda takes it to the next level, if you will. And once we have that out there, I think we're ready to open up the doors for everyone to come in and give their opinion on this. So yes, one and a half to two months. And on the topic, I do wanna take the opportunity to thank you out there, the listeners who are participating in the beta, because we've gotten some really, really good feedback and we've been able to bounce ideas with you. What we are building now is very heavily influenced by your input. If you are there, beta testers, thank you.

So you've also built an AI assistant directly into the editor. Why do that rather than letting teams use their own AI tools?

Yeah. I think that's a good one. Obviously, everyone is using some form of external AI nowadays, but we've seen that for a lot of these teams, what they actually end up doing is breaking the workflow rather than helping it. I'll elaborate a little on what I mean by that. When you draft in ChatGPT and then paste the results into Paligo, you get the speed of an AI, but you actually lose, let's say, the structural context. The output that you get is just raw text, and you have to manually format it, tag it, and clean it up so it's actually structured content. So in a way you do save time in one place, but you create a little bit of rework in another. That's why we wanted to have it all built in, in context. Because our AI assistant now actually outputs structured content directly.
So you could actually feed it pure text. A lot of a tech writer's job is chasing down the engineers building the product and getting information so they can describe how the functionality works. They can just get pure text, paste it in, and have it create a step-by-step procedure. It's a real convenience, saving a lot of time.

Yeah. And to add to what Thommie mentioned, for any AI features in our product, our core belief is that we will make them context aware rather than bolting AI on top of the product and making it less relevant than it could be. That's why, when you look at our AI assistant and all the features that Thommie is gonna talk about, it's very much in the context of where you are in the workflow of your content journey. That's extremely important for us. You're gonna hear me and Thommie talk more and more about context-aware AI, simply because we don't believe in bolting AI features on top of the product that no one uses. Rather, we'd like to have context-aware AI that many of you use in your regular workflows.

So, I'm gonna interrupt just slightly here. We have a very lovely comment from Caitlin saying this isn't a question, but just wanted to say thank you, Thommie and your team. They've been using the beta extensively and are beyond impressed with your response and development effort, and a massive kudos and congrats to the team on this. So thank you so much for the kind comment, Caitlin. We actually do have two questions from the audience if you guys would like to jump in on them. Would that be okay?

Yeah, bring it up.

All right. So first one from Caitlin, same lady who gave the lovely feedback. This discussion around pressure lines up perfectly with our experience.
Is Paligo looking into adding AI features to help manage content, like identifying unused images, topics, taxonomies, using AI to make recommendations on reusing content, etcetera?

Yes. Very much so. I can see you smiling there, Rahul. But yeah, this is on the roadmap. We've actually started a little bit of this work already. Not all of what you see here, but some of this we're already working on. So yes, this is very much our ambition. And I think this relates to what Rahul said: having the AI in context, helping you where you are in the system. It shouldn't feel like a separate tool. If you are working in some context, it should be helpful in that place. I can give you a little hint here. We are looking at finding duplicate topics that maybe describe the same thing even though they don't use the same words. Can it help you narrow those down, improve your reuse, and get a little bit more of the single sourcing out there? So yes, we're looking at this.

Thank you. And let me just get the second question. This one is from Annie. We have two similar questions here, but essentially: will we have an integration with Claude Code?

Not initially, but as time goes on, we're very much open to making it model agnostic. Right now, initially, we are working with OpenAI. But that doesn't define the future, so we're very much open to that.

Yeah. And just to add, I think the question has two parts. One is the Anthropic models that Thommie talked about, the Opus and the Sonnets of the world. And then integration with Claude Code is very much about integration on the tool side. So it's a really great question, Annie. If we start to see that there is more pull coming from users like yourself, absolutely we will look at it. There is no doubt about it.
You're gonna see us evolve the product quite significantly as we move on, but we also wanna make sure that we do the things that you really want and really appreciate, because this will help alleviate some pain on your side. So everything is on the table for sure.

Alrighty. So we'll carry on. And again, if I come across some good questions, I'll jump in with them. So, Thommie, next: translation has always been a major bottleneck. How do Paligo's new AI translations change this process?

Yeah, so translation has been one of the biggest pains, honestly, for the teams that I work with. And the process itself is the reason. All of us care about time to market. All of us want to be proactive, but the sad reality is that you have something ready, it needs to get out there, and everything needs to be done ASAP. And then there's the process of exporting content and briefing a language service provider, and you wait maybe even weeks to get it back. Then you get it back, you import it, you review it. The process itself is pretty long and can take a lot of time. We wanted to simplify this a lot and make it quicker. So we now have the process fully available inside of Paligo. The content doesn't need to be sent away to external providers. You can do it directly in Paligo. You select the language you wanna translate to, you kick off the translation, and you have it ready within minutes. So I think it's a really nice convenience and efficiency gain. I haven't looked into the chat, and there might be questions out there, but I'll say it anyway, because I think it's gonna come up. A lot of you have glossaries. We thought about that as well. If you have one, you can import it into Paligo, or just create a new glossary in Paligo, because I know that's important for a lot of people.
All right, so we actually have the perfect question, one I was gonna ask you anyway. I might as well involve the audience. What AI is behind the AI translations? Is it DeepL?

Yes. That's the quick answer. We wanted something that is already out there and that we know gives good results. Because the biggest caveat with translation is the quality. We need to be able to ensure that, and we know this is a good player that has good quality. So that's why we wanted to partner with a good brand for this.

Yeah, and just to add, Thommie: of course we had options to use a basic translator like Google Translate, or any LLM for that matter. But we thought that we would partner with a company that is deep into translation and actually understands the domain, so that the translation is of high quality. That's why we have chosen to go with DeepL.

Alright. So I'm just going to check for any other AI or translation questions.

There is one question, I see this one there, and it's actually on the glossaries that I spoke about. So I'm just gonna address this. The question is, can we import glossaries into Paligo? Yes, you can import them. Basically you just prepare a CSV file and you can import it. And if you don't have one, you can create them directly in Paligo as well. So yes, you can do that.

Okay. Question answered. So the fourth feature then, Markdown publishing, might surprise some people. Can you explain what it unlocks, especially for teams thinking about AI?

Yeah, I think in one way it might surprise, but in others, I don't think it should. It's actually one of our most heavily requested features. We have a few, and this is definitely up top. The use case has been relevant before. You have developer tools and static site generators. But with the influx of AI, the need for this, or the number of use cases, has really spiked.
A few years ago, you published your HTML and PDFs, maybe a help center portal. But today your content needs to feed into AI chatbots or RAG pipelines, and all of these systems work great with Markdown. So for us, this was a no-brainer. It was a highly requested one and the trend is out there. This just adds a lot more value now. So no, not a big surprise in that sense.

Thommie, this is another feature that I'm very excited about. Essentially, this is your structured, governed, version-controlled content flowing directly into the format AI consumes. This is what Markdown publishing is all about. So I have a question for you, Thommie, as well. Could you say that this is a way to get more value out of your structured content?

Yeah. That's exactly it. Because if you think about it, the content that you already invested time and resources into creating, that you're already using for PDF and HTML, is the same content that you publish to Markdown. So you're actually getting more return on the investment you already made. You're getting more value out of the content you spent time creating. That's definitely the case. And it's also obviously a convenience, because you could already in some way convert HTML to Markdown, but HTML isn't really perfect for that. It carries a lot of visual baggage like styling and layout instructions. As a final comment on this, I think it's really important to circle back and close the circle here. In the beginning, Rahul, you talked about the missing layer. This is the first step towards closing that. Now you can actually feed your structured content directly into AI pipelines. So this is the first step.

Yeah. And I remember, Thommie, that when I started, I had many conversations with our customers and users. This is one of the many features that they requested at that time.
So I'm really happy that you and the product engineering team have delivered this, which is super exciting.

We have a question from Mike about this feature. He's asking, doesn't Markdown dumb down the semantic markup of the XML source of the topics?

Right. Should I take it?

Yes. Go.

Yeah. So Mike, it's a great question, by the way. Yes, if you compare the semantic depth of DocBook XML with what you get when you publish to Markdown, it does dumb down that semantic richness, the contextual richness that we talk about. You're absolutely right. There are still ways to preserve some of it, not all of it. This is also why, with the missing layer that I mentioned earlier, our intent with structured truth for enterprise AI is to make sure that we still preserve the semantic richness of DocBook XML, and to work out how we can expose it all the way on the other side, in your agentic workflows. I don't want to give away more than that, but I would say stay tuned for this. This is something that we deeply care about. So yes, Markdown publishing is here to stay, but we will do even better than that to make sure that you preserve the semantic richness that you talked about.

Alright. Thank you, Mike. So last question for you both. For someone listening today, whether they're a current customer or evaluating platforms, what would you like them to walk away with?

You wanna start or should I?

You can go ahead, Thommie.

Okay. I think the biggest thing is that there's a wave of change coming whether we like it or not. And by having good structure, you are future proofing yourself. It's so clear. Take Google search. It's such a fundamental thing, but if you go and Google something now, what's the top thing that comes up? It's an AI answer.
I'm personally not spending time scrolling through the results. So in a sense, what that means is that AI is the one that consumes our content. This is a big shift in how everything works. I even tried asking ChatGPT how to publish to one of Paligo's integrations. And the answer I got was actually really good, because it pulled out all the information that I needed. It pulled out how to set up the integration and then also how to publish to it. That's something where I would otherwise need to go to multiple places to find the information. So the shift is real and it's already here. When Google changes the landing page for your search, you know that something is happening. So that is, I think, the biggest change, and my takeaway from this.

Yeah. I agree, Thommie. If I want you to take one thing out of this webinar, let me try to summarize it. I'll start here. You cannot retrospectively structure enterprise content perfectly using AI alone. We know that. Anything you do downstream, retroactively, is gonna help us. Because we know that language models hallucinate, because they infer relationships that don't exist and they lack business context. The only reliable path to AI-ready knowledge, we genuinely believe, is structure at source. That means creating structure at the point of creation of the content. And for every enterprise that is deploying AI, I think the question is, will that AI tell the truth or will it make things up? The answer depends on what lies underneath. If your AI is pulling from content that is governed, structured, and version controlled, it can be trusted. If it's pulling from a Word doc or an outdated wiki or a SharePoint, I can tell you it will confidently give wrong answers.
The race, I think, isn't about who gets the best agents or the best AI models. It's about who has the best foundation, the content foundation, the mission-critical content infrastructure. While enterprises are debating which agent platform to buy, I genuinely, genuinely believe the winners are building the knowledge foundation, the content infrastructure foundation that will make their agents work. I believe Paligo is that foundation. We are building that structured truth layer for enterprise AI. So if you are already a customer of ours, I think this release already proves that point: we will help you create better content faster, translate it efficiently, and connect to AI workflows through Markdown publishing. And if you are evaluating content creation platforms like Paligo, ask yourself: is this tool preparing my content for the AI future that is upon us? Or is it just yet another place where you create and store documents? I will leave the answer to you.

A strong question to end on. And we do have quite a list from the watchers. We have a hard stop at the hour, so what we're gonna do is go through as many as we can, and for any we don't get to, we'll follow up with you over email afterwards. So if you guys are ready, I'm gonna start powering through them, and we'll start from the top. The barrier that I need to overcome at my organization is to convince my administration board to change to a structured authoring approach. What arguments do I have to back a change like this?

Joao, this is such a great question, and I would love to hear directly from you. So feel free to reach out to me, because I can tell you this is one of the things that we are extremely passionate about: how to have that conversation.
I think the moment you start to think about structured authoring alone, very soon, as I mentioned in the discussion we had at the start of the webinar, it starts becoming a discussion about efficiency gains, because everyone thinks that AI is this magic superpower. It will come in and it will make all of us efficient. By the way, that's true as well, right? But I think the real conversation should be about the return on investment of structured authoring, beyond only creating, managing, publishing, and even hosting documents. Think about your content workflow all the way; internally we call it from ingestion to consumption. And consumption can happen by humans, but also by agents. If you think about that, and if you think about the fact that the main prerequisite for hallucination-free, trustworthy AI is structured content written through structured authoring, the conversation with your board or your decision maker changes completely. Because then, all of a sudden, this discussion is not about a point tool which creates content, but about creating mission-critical infrastructure which really makes your enterprise AI successful. And that conversation needs to happen. And I can just tell you, we will also enable you to have such discussions. So if you're struggling with that discussion, please reach out to any of us and I will be happy to jump on a call and discuss more, because this is one of the things that we are very passionate about, and we are working very hard to set up those conversations for you in your organizations as well. Okay, so Joao, our people will be happy to talk to your people. Hope to see you again. The next question then is from our customer, Susan. Will Paligo support dynamic publishing, where a manual is generated based on a customer's selected product or feature options, so they only receive the content relevant to their specific configuration?
If yes, what is the recommended way to set this up? So in a way, I think we already do this, right, with profiling and variables. So maybe I misunderstand the question here. But you do have the option today. Let me just clarify that then. So you can create a publication, and there can be more information in the publication itself than actually ends up in the outputs. And you can filter out and set the context. So for example, you have an instruction that is valid for several products. You can put a placeholder there, which we call a variant, sorry, a variable. And then when you publish, you can select which variable should be true, and it actually changes the information that goes out. So in a way, this is possible. All right. Thank you. This is a quick one from Paul. Do you plan long term to deprecate the existing editor? So let me just say yes, but we're not in a rush, right? We fully respect that we have many long-time users of the old editor, and we won't rush this. We will obviously work towards moving all the functionality in there over to the new one over time. And when we are at a stage where we feel like we have all the things covered in the new one, then we can start looking at this. But you don't need to feel any rush on this. At some point, though, it doesn't make sense to have two editors at the same time, right? So yes, but not in a rush. Yeah. And also, just to give you more perspective here, Paul, I think it's important for all of you as users to understand that the current editor has done wonders for us for the last ten years, right? And I know that many of you still love the current editor. But you need to remember it has been built on a technology stack with which it is very difficult to keep up if we really want to release new and cool functionality for you to get more out of Paligo.
And this is why we have taken the decision to move to a new, next-gen editor with a new technology stack and the new possibilities that we can offer to you. So this is the reason we have moved in that direction. And as Thommie said, we are not in a rush, but you will start to see over the coming period that we bring it closer to feature parity with our current editor. Yeah, maybe one comment from me as well: the AI assistant we talked about today, for example, would not be possible for us to build in the old editor because of the old foundation and the legacy that Rahul talked about, right? So for all of this powerful in-context help, we also need to create a new foundation, which is part of the reason we built the new editor. But one thing you will see is us moving all the different types of users that we have today to the same environment. So we have reviewers who can work with review assignments, and that's something that you'll see us do as well, to converge everyone into one environment. Thank you very much. Okay, we have a really important question now, I think, from Cecil. Some of us are in confidential environments where AI confidentiality is an issue. Some sell products to environments where products can be banned as supply chain threats. Is there a way to guarantee to our clients that we're not using prohibited products? So let me just read it again. Is there a way to guarantee... So by prohibited, I guess there are some lists and tools you're not allowed to use. That's what I would assume. Yeah. Like, you know, you're not allowed to have training models and things like that reading confidential content and so on. Yeah. So, I mean, we are working very much on the security aspect of this. We have a lot of ongoing projects, because all of this obviously needs to be done very responsibly, right? So this is top of mind and a high priority for us as well.
So we are very thorough on who we let in, and it's about the customer data as well. It's not something that we're sharing in that sense. But Rahul, I think maybe you also have some comments on this. I can see you waiting to chime in here. No, no, absolutely. So I think this is a really great question, Cecil. I can completely understand where you're coming from, because in my previous job I came from the security services industry, where I could see the supply chain threats from their perspective, and also the need to make sure that your sensitive information is not leaked for model training. So there are a few things I can tell you. Number one, our current product, Paligo, is a multi-tenant product. That means we create a unique tenant for you, which is hosted on AWS. So we have all of that in place to make sure that we don't mix your information with other customers' data. The second thing is that the LLMs we're gonna use for AI, specifically OpenAI in the case of the AI assistant, are not allowed to train their models on your content. It's very, very clear. This is there as a part of our contract. We'll make sure that your content stays your responsibility, meaning that you actually own your content. It sits with you in your own tenant. Even Paligo can't directly access your content without your permission, right? So we genuinely believe that this is the foundation for trustworthy AI. This is the foundation for responsible AI, and we will do whatever is required to ensure that. The other thing you need to remember is that we are based out of Stockholm, which means the European Union. We also need to abide by both the GDPR and the upcoming EU AI Act.
Meaning that all the concerns that you have here will also be addressed by the EU AI Act, simply because some of those requirements are very, very stringent when it comes to using your data to train models. So yes, we are very much aware of it. At the same time, just remember, we are very well aware that many of our customers come from regulated industries as well. So we need to make sure that whatever choices we make on the product, we keep confidentiality high on the agenda. I hope that answers your question. Thank you, Cecil. The next question is a quick one for Thommie, I think. We have a DeepL Pro account. Can we use that for the AI translations? No, not at the moment. You set up the integration in Paligo, and it goes through us. That said, if you have a glossary and all of that, you can export it and import it here, so you can keep that. But it goes through us. Okay, lovely. Next question, about Markdown. Will the Markdown change become compatible with tools like GitLab, or behave in Paligo similar to GitLab? Let me see. So I think there are different flavors of Markdown, if I'm understanding the question correctly. And I think, oh, I need to look it up. Sorry. I think there are like four main flavors. I cannot really remember which one we're using, but I don't think it should be a problem here. Yeah. But maybe what we can do, Thommie, since I also don't know the answer: Mary, I think we will get back to you after checking with our team. Perfect. Always better to research the right answer than to invent a wrong answer. Okay. Next, from Robert. Does the new release improve the taxonomy feature within Paligo? So this release doesn't have anything related to taxonomy. Okay, nice and quick. Next one: does Paligo learn only from its users, or are there any external training models involved as well? So this is on the AI, I presume.
So I think right now, no, we don't do that. So it's very limited, right? Like Rahul said, we're very careful about this at the moment. So no. Yeah. And the answer also, Prashant, if you're talking about AI features, is no, we don't even use your customer data to train our model today. So no, we don't learn anything right now, because it's super important from an AI responsibility perspective. Of course, we have tuned the AI assistant so that it works very well within Paligo for structured authoring, but we have not trained any model on your data. Now, if you really want to do this down the line, yes, Paligo can provide options and we will look at that, but it will still be on your demand, with your consent. And for us, it's extremely important that we handle the privacy of your data and your customers' data in a very responsible way. Absolutely. The next question is from Wendy. Will the new release help to take a vast amount of barely structured content into structure? This has always been a daunting idea for our team. Yeah, I think this is a great one, and this is really the direction we wanna go. This initial release is the first release, obviously, for the AI assistant. And right now, we are working on a topic level. So that's where you operate. But that said, I wouldn't exclude extending this to a document level as well in the future. I would be happy to get there. But, yeah, the first step is topic level. Yeah. And just to add to that, Wendy, it's a great question. All I can say is stay tuned. We are extremely passionate about the topic as well. So, yes, we are focused on this. I can't say when, but as I said earlier on one of the questions that Andy asked, we care about it from ingestion to consumption and everything in between.
That's what you're gonna see Paligo delivering on over time. So that means ingestion of barely structured content into structure. We are passionate about that topic for sure. The next question is from Shraddha, about AI translations. One, when AI applies translation, does it retain all variables, conditions, et cetera, of the structured content? And two, how do we bring in our existing translation memory, not just the glossary? Okay. This is a really good one. So, yes, it translates the content as it is, so it shouldn't remove anything in the content. But on the translation memory, I think this is a very interesting question, because typically translation is very, very expensive. Especially if you have a process where an external human reviews everything, the cost per translation is very high. And that actually creates this need for translation memory, so that you first go and look for perfect matches before you send it off to a human, right? But with machine translation, the price per translation is so much better. So it actually removes the need for this, because it is not an expensive way to translate compared to the others. So there's not a real need for translation memory when you're working with machine translation like this. Okay, great. Next question, from Kyle. Will Paligo have any capacity to understand our specific style guides or terminology and help teams maintain consistency in writing using this information? It's like they're reading our minds here. We have so many cool ideas, and this is actually something that some of our developers have played around with a little bit. So it's at the idea stage, but yes, stay tuned. We have so many ideas. We just need to find a way to prioritize between them, right? But yes. Double the engineering team. Next, from Martin.
When adding a new chapter to the existing content, would the next Paligo AI version be able to do something like this: analyze the existing content for context, take a draft or quick notes, and create and add a new chapter out of it automatically? So, another cool idea. I mean, you can send me an email, Martin, and we can discuss this later on and maybe look at your use case. So I think we're open. As I said, this also goes back to how we work with the new editor that we're creating, right? We're creating a tool that you guys are gonna use. So if you have some cool use cases, ping us and we'll digest them together and see if they actually fit the DNA of Paligo and the journey ahead of us. But I think this is very much related to the direction we're looking at. So yeah, give me a call. We're getting loads of great ideas for what could be our next steps. So, next one, from Stephanie: can I use multiple glossaries and select which ones to use for which translation project? Oh, I think I need to circle back on this one. I don't know if we have multiple glossaries or one. So, yes, I'm sorry for not answering these on the fly, but we need to circle back on this. Okey dokey. Another glossary question: how are you using glossaries in Paligo now, and will this change in the future with AI? So this could be glossaries in terms of translations, but it could also be glossaries in terms of using a glossary in your content, right? If you're referring to the translation glossaries, I think we probably covered that after the question was asked. But if you're talking about glossaries in the documentation itself, right now we have structural elements for that. And I think this ties into the direction we talked about. The AI assistant is about making the structure easier to generate. So yes, maybe it can already create glossaries. I haven't tried it, but it's about making the AI assistant able to support you more and more.
And I think this could very much be something that the AI assistant could support in the future. So yes. Okey dokey. Questions are still flying in. We'll do as many as we can. When adding a new chapter to the existing... oh, wait. Did we do this one already? Yes, we did. Okay. Excuse me. Let me clean up my questions. There are so many. Here we go. Another idea. AI is very useful for creating new content. We've been looking for AI solutions that can make updates in track changes mode to existing content. Is that something that we're looking into? Let me just read it again. Sorry. So, track changes mode... okay. So if I understand this correctly, it's the suggestions functionality that you're referring to, which basically works like track changes. Let me just explain it to the audience. It's something that we added a while ago. We're actually gonna rework it a little bit now with the new editor to improve it. But basically, instead of writing things directly into the topics, you add them as a suggestion and someone else can approve it. So I think we should definitely look at that; that's a great idea. I can certainly see a lot of use cases where people would like to work with track changes. Especially if you're trying to distribute the authoring within the organization, you might have someone that approves it. So yeah, I love the idea and we should look at this. Alrighty, next question. Do we really need to import a glossary or translation memory into Paligo if we want to use the new AI translator? Can't the AI use the preexisting translations as a basis? Okay. So you don't need to use the glossary, right? You could have it translate into the default words. But you cannot use the preexisting translations to teach it. I think it also goes a little bit into the responsibility that we talked about before.
This would mean that we would actually train DeepL on your content, and that's not something we wanna do right now. So no, you need to have a glossary if you have a fixed translation that you wanna default to for specific words. But if we can do this in a responsible way, I think we should consider it in the future. Can we connect the new editor with a terminology database for authoring support? So we don't have a terminology tool built in, but the editor runs in the browser, right? So if you are working with something like Acrolinx and all of those, it's very likely that it actually works by default. I haven't tested it myself, but I would assume that any, let's say, browser-based terminology tool that you're already using could probably be used with our new editor. Next: are there plans to let AI help writers tag topics with metadata or filtering based on what is written by the writer? AI needs metadata and good naming, but maybe it can help us to add this consistently. A hundred percent agree with this one. This is a great question, Martin. I think this is pretty funny, because I've seen external people doing this with our API. So in a way you could actually do it, but it would be on you to code it. And that's something we wanna get away from, right? And this is a great example of a context where AI helps. So I really love this, and yes, this is something that I think we should look at. Okay, are you guys okay to power through the final four questions, or are you pressed for time? No, let's go ahead. All right, let's do it. Then the next question, from Emma. Can we import style guide rules into Paligo AI so it learns how we write content? I think we had a similar question about this before, where we actually had some experiments here at the office with this. So it's not a fully ready feature out there. I think what's showing here is that we have so many cool ideas.
A lot of the questions we've had, we've actually had discussions on internally. And I think we will need to prioritize amongst all of this, but trust us, we will find the highest-impact things and roll them out. And if you have ideas, you can just email me or Rahul, anyone, and give us your ideas, and we promise that we will consider them. Great. And one more from Emma: can API keys be available to more than just admins, so more users can use Cloud Code with Paligo? Yeah, I think we need to circle back on this one. I think there are some other reasons for this as well that I need to verify before I come back on this one. So we need to circle back on this. Sorry. Yep, that's fair. And next we have a question from Chu. Are there plans to develop a feature that allows automatic side-by-side version comparisons in publications? So I think you do have some functionality with the timeline. If you are using our snapshots, you can actually... So I'll give you a use case. Let's say that you are about to send something out for review and you create a snapshot of the content, right? And then someone changes it. Sorry, not review: you send it to a subject matter expert to tweak, and then you get it back. You can actually do that diffing already, side by side. So this is possible, but we are looking at different options for versioning as well. So it's very possible that we will do some tweaks and improvements on this. But it is possible today. Okey dokey. A follow-up question on the taxonomies. Taxonomies are pretty critical for scale. Is Paligo looking into a feature that would allow us to build a publication based on taxonomy selections? Ah, I like this. I mean, yes. I think this would be cool to have. This has also been raised internally, right, in brainstorming. So I think this is good. I love this idea. I cannot give you a timeline on this, but yes. It's a great idea, Katelyn, for sure. Yeah. All the good ideas.
And this is our final question, a follow-up from Chu. Part of the publication, because we're a certification scheme, we need to publish for transparency purposes. Okay. So that was a follow-up on the context from the previous question. In that case, thank you, everybody. It's been really amazing to have this session, and we really appreciate all the questions. I hope you enjoyed this. You'll receive the recording in the next couple of days. Don't forget to go to paligo.net to learn more about us if you are not a customer of ours already, and to learn more about how Paligo will become the platform for structured truth for the enterprise. Goodbye, and we'll see you in the next session. Thank you, Thommie and Rahul. Thank you.